Doom Loops

17 March 2026

A slightly indulgent post this one, but I promise there's some design-and-AI reflection in the second half.

So, a few weeks ago I developed tinnitus in my left ear (blame a lifetime of loud music, or perhaps an ear infection). It's pretty annoying but I'm getting used to it.

Anyway, in an attempt to find some agency in the situation I decided to make a little noise app that I could use to mask the sound at night.

This blog post is about where that all led.

Hey, what's that noise?

There's a corner of YouTube dedicated to 10hr Brown Noise and Spaceship Cabin Sounds videos. I like all that stuff, but I’ve always thought it would be nice to be able to roll my own custom soundscapes.

So I got to work, with Google Antigravity assisting with the coding. I say assisting - it was pure vibe coding. The AI was doing 95% of the dev work whilst I acted as tyrannical product / design lead, feeding it prompts and mock-ups made in Google Slides.

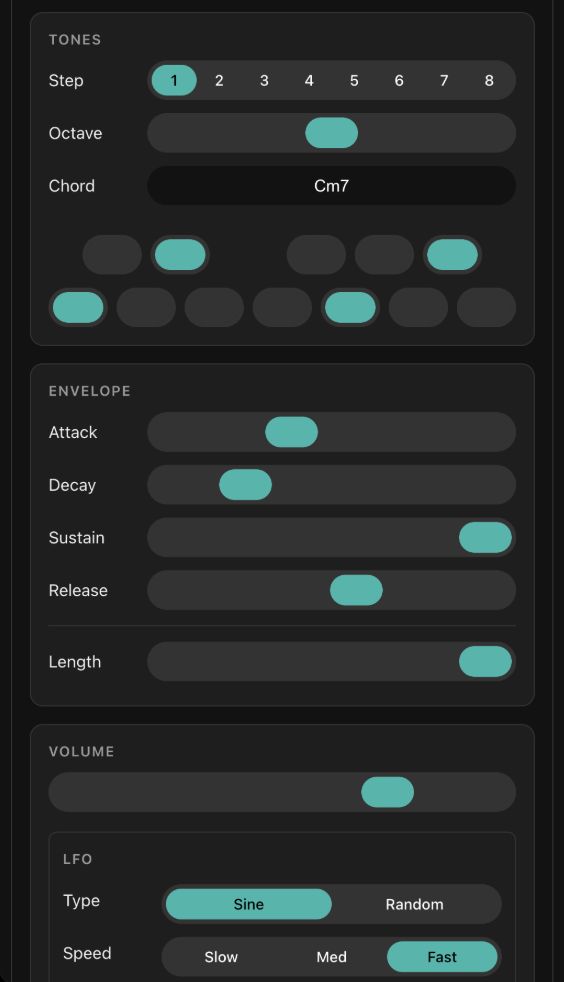

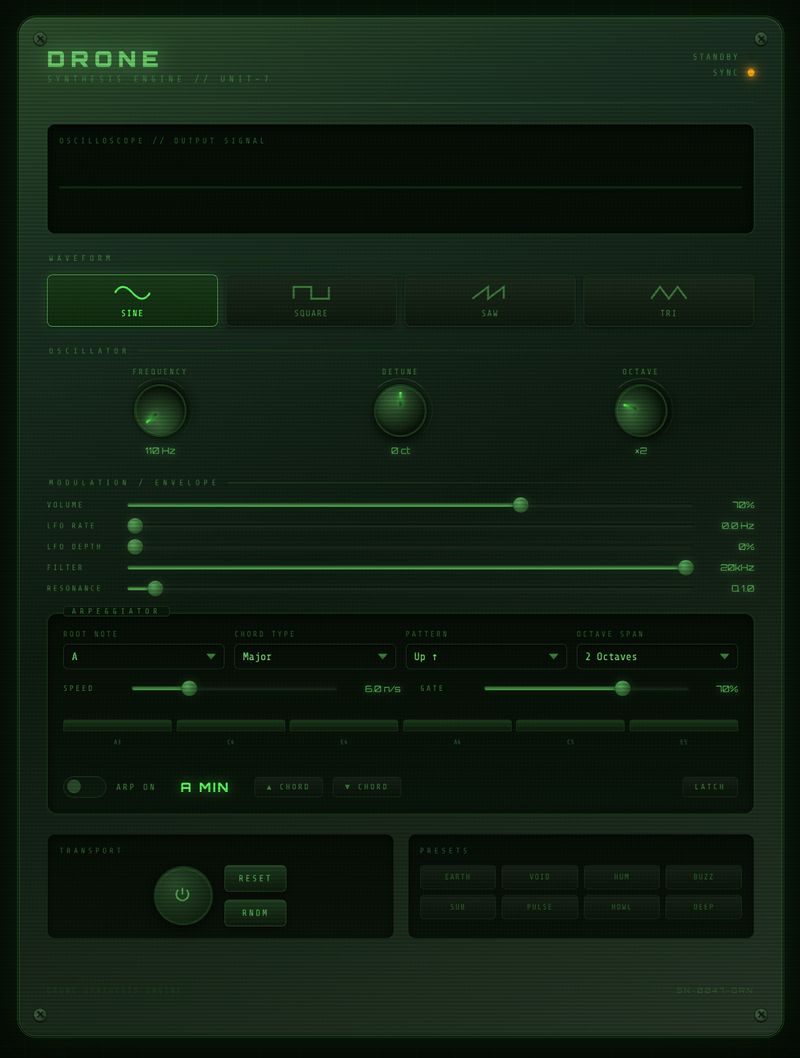

And well, pretty soon things got out of hand. Because the other thing I like is ambient music - particularly the darker, dronier end of the pool. And if I’m making a noise app I may as well chuck in some oscillators and an LFO, right?

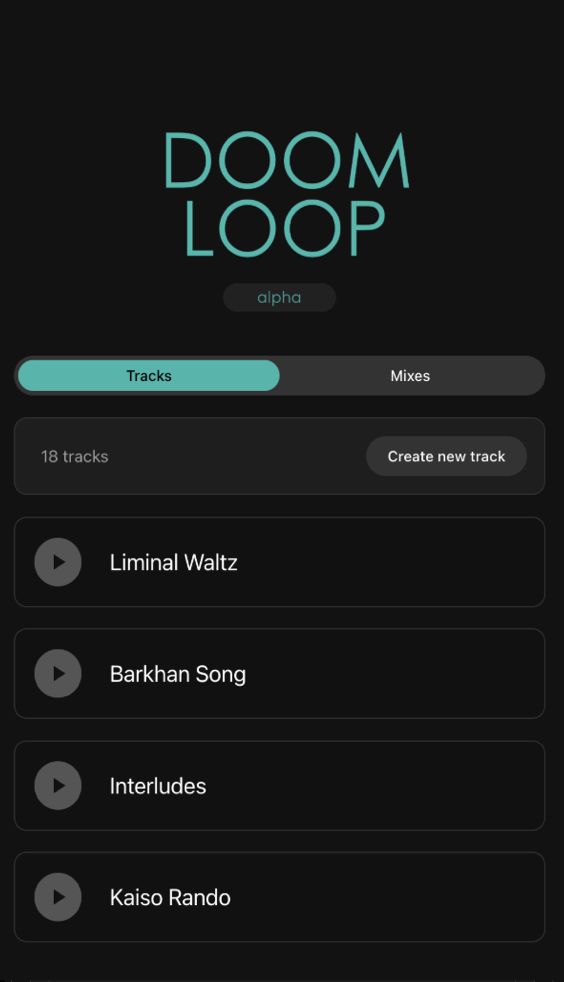

By the end of a few sessions we’d built a simple little ambient synth/sequencer. I named it Doom Loop and stuck it here: doomloop.cc

Here's a little 4-minute mix of a few tracks I made with it:

Feel free to have a play. It stores everything on your device so only you have access to your tracks and mixes - but it does have file import/export, so you can share things if you want.

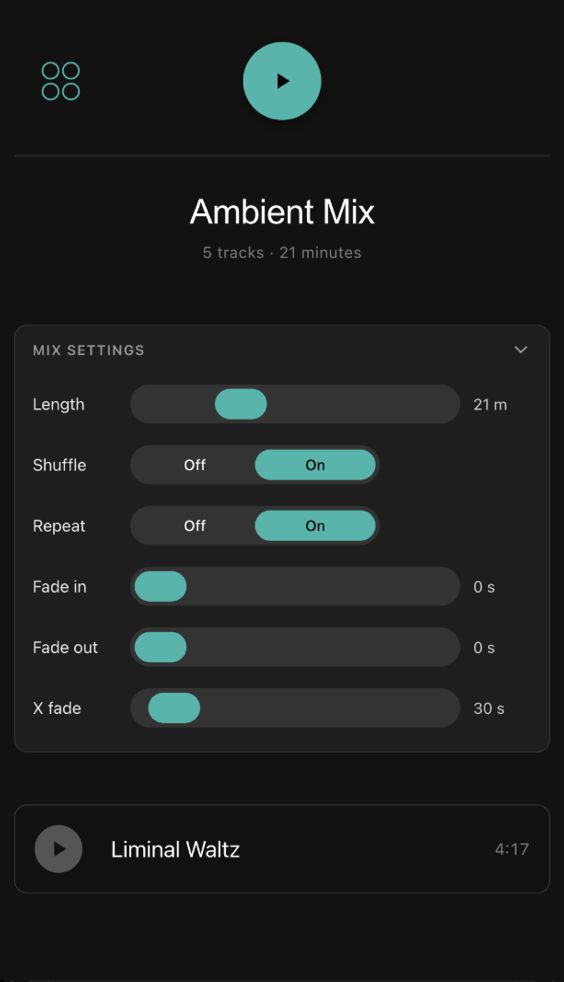

It's very much optimised for ambient. There’s no global tempo. Notes and tracks stretch to fill the space you give them. Tracks will loop and evolve endlessly if you want. Everything gets its own little sequencer and they can all be running at different speeds.

Tracks have no specified length - they play forever. When you create a mix you set the overall mix length and then the tracks are distributed equally in time across it. Crossfades between tracks in a mix can be up to 10 minutes long, which would be crazy for most music, but perfect for creating slowly emerging movements in a longer suite. You can randomly vary chords, notes, filters, volume etc. or modulate them in cycles of up to an hour.
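To make the mix layout above concrete, here is a rough Python sketch of one way to spread tracks equally across a mix and overlap neighbours with a crossfade. It is not Doom Loop's actual code: the names `Slot`, `schedule_mix` and `gain_at`, the linear fade shape, and the choice to centre each crossfade on the boundary between two tracks are all assumptions made for illustration.

```python
from dataclasses import dataclass

MAX_CROSSFADE_S = 10 * 60  # the post mentions crossfades of up to 10 minutes


@dataclass
class Slot:
    name: str
    start: float  # seconds at which the track starts fading in
    end: float    # seconds at which the track has fully faded out


def schedule_mix(track_names, mix_length_s, crossfade_s):
    """Give each track an equal share of the mix, overlapping neighbours by the crossfade."""
    if not track_names:
        return []
    slot_len = mix_length_s / len(track_names)
    # Assumption: a crossfade is never longer than one track's share of the mix.
    fade = min(crossfade_s, MAX_CROSSFADE_S, slot_len)
    slots = []
    for i, name in enumerate(track_names):
        # Centre each crossfade on the boundary between two tracks,
        # clamping the first start and last end to the mix itself.
        start = max(0.0, i * slot_len - fade / 2)
        end = min(mix_length_s, (i + 1) * slot_len + fade / 2)
        slots.append(Slot(name, start, end))
    return slots


def gain_at(slot, t, fade_s):
    """Linear fade in and out: 0.0 outside the slot, 1.0 once fully faded in."""
    if t <= slot.start or t >= slot.end:
        return 0.0
    if fade_s <= 0:
        return 1.0
    fade_in = (t - slot.start) / fade_s
    fade_out = (slot.end - t) / fade_s
    return max(0.0, min(1.0, fade_in, fade_out))


# An hour-long mix of three tracks with 10-minute crossfades.
slots = schedule_mix(["drone", "tide", "static"], 3600, 600)
for slot in slots:
    print(slot)
```

With these numbers each track gets a 20-minute share, and at the 20-minute mark the first two tracks are both at half volume, which is the slow hand-over the long crossfades are for.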

All of this means you can create tracks that are subtly different each time you listen to them. I’ve been a little obsessed with that idea - the tyranny of recorded media is that it’s exactly the same every time you hear it. We deserve music that sounds different each time.

It’s pretty basic right now, barely an alpha really. I’m scratching an itch that I don’t know if anyone else has, so I don’t want to sink too much time into it.

Some reflections

OK I promised some AI and design related content - here are a few reflections on the experience of developing the app this way.

AI tools > AI artefacts

Doom Loop is an example of using AI to build a creative tool rather than directly producing a creative artefact. I've been discussing this idea with my colleague Nick Ritchie recently - I think we're both finding it more satisfying to use AI in this way.

For a start it leaves you much more in control of the creative process. And once you have the tool you don't need more AI to create more outputs. It also feels less directly exploitative and more likely to generate genuinely weird and unpredictable results.

We've been creating little tools like this to explore the potential of the idea - here are some examples.

There's one that makes endless abstract landscapes, one that does random rotatable 3D blocks and this one that Nick made, that we ended up using to generate parts of the Incubator for AI visual identity.

Interfaces make AI legible

I mentioned earlier that I'd added a simple import/export feature so I could easily share tracks and mixes as JSON files.

It worked, but it also did something else much more significant: it exposed the internal data structures of the app. Now you don’t need the interface to create tracks and mixes. You can directly edit the JSON.
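As a rough illustration of what editing the JSON directly might look like, here is a small Python sketch. The schema is entirely made up: field names such as `layers`, `lfo` and `periodSeconds` are hypothetical and will not match Doom Loop's real export format. The one-hour cap simply echoes the "cycles of up to an hour" mentioned earlier.

```python
import json

# Hypothetical track file: every field name here is invented for illustration.
track_json = """
{
  "name": "Slow Tide",
  "layers": [
    {"type": "oscillator", "note": "C2", "volume": 0.6,
     "lfo": {"target": "filter", "periodSeconds": 600}},
    {"type": "noise", "volume": 0.3}
  ]
}
"""


def slow_down_lfos(track, factor, max_period_s=3600):
    """Return a copy of the track with every LFO period multiplied by factor."""
    edited = json.loads(json.dumps(track))  # simple deep copy for JSON-like data
    for layer in edited.get("layers", []):
        lfo = layer.get("lfo")
        if lfo:
            # Cap the period at an hour, the longest cycle the post mentions.
            lfo["periodSeconds"] = min(lfo["periodSeconds"] * factor, max_period_s)
    return edited


track = json.loads(track_json)
edited = slow_down_lfos(track, 4)
print(json.dumps(edited, indent=2))
```

The point is less the specific tweak than the fact that a track is just data: anything that can read and write a file like this can make or remix one.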

And if you can do it, so can an AI...

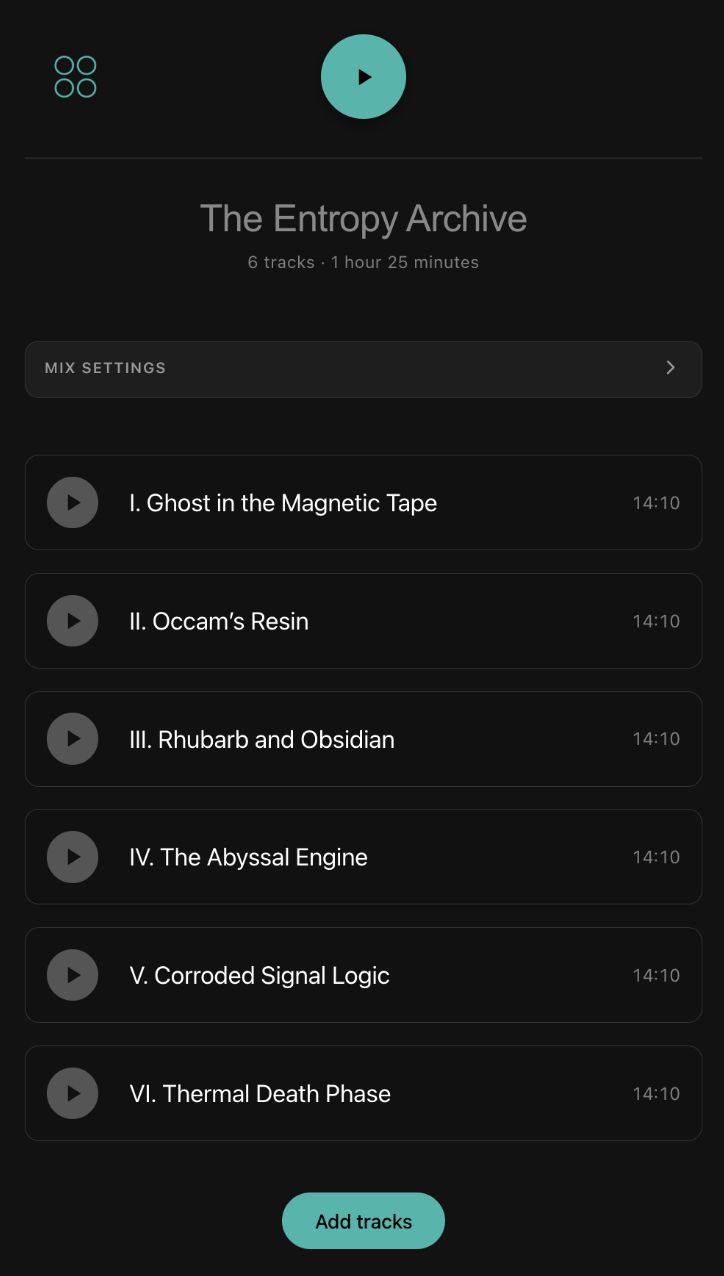

So I tried pointing Gemini at the repo for reference and asked it to generate me the JSON file for an hour-long ambient suite in the style of Eliane Radigue, William Basinski, Iron Cthulhu Apocalypse or Aphex Twin.

And well... it really tried! It certainly talked the talk. The track names and descriptions were almost comically on point (see image above). The tracks themselves were… fine. I was pretty impressed that it was able to generate any sound at all, and in most cases you could tell what it was aiming for. When I asked it for a second, more melodic mix, it was able to meet that brief.

Here are the two JSON files - you can import them into Doom Loop and listen for yourself:

What I really like about using AI in this way is, again, that you retain full control and a kind of observability. You can open the box and see inside, and tweak all of the parameters. The track and sound names give hints as to what the AI was aiming for.

It opens up space for a much more collaborative experience. Far removed from the ‘Hey Claude, make me an X’ approach that can leave you feeling like a lab rat pushing a lever for another pellet.

It made me reflect that good user interfaces make AI's decisions legible, and also provide ways for humans to reach in and adjust things - we should provide them by default.

I asked Gemini to comment on some early notes for this post. It pointed out that the codebase of the software is acting as one giant prompt for the AI - telling it what aspects of the sonic world I care about, the parameters it can vary and by how much.

The ghost in the machine

It can be very tempting to anthropomorphise AI. When Gemini created that ambient suite my initial reaction was to direct my sense of wonder towards Gemini itself, or perhaps to the engineers who created it.

But I'm consciously trying to change the way I relate to AI when I use it now. Using it should feel like a celebration of all of the human experience and ingenuity that went into it, especially that of the people whose work ended up in the training data.

Steam engine time!

Halfway through the project I saw that Sarah Drummond had worked up a similar concept into this amazing thing. It's way fancier looking than mine. Clearly, it's Steam Engine Time for vibe-coded drone apps.

I asked Sarah and another friend Martin if they'd playtest the app and offer a bit of feedback. They immediately spotted some glaring usability fails which I was able to address. I didn't need it, but it was good to be reminded of the risks of prolonged, solo product development. You are not your user, even when you are.

Which brings me finally to...

This is not public service design

I feel I always end these more whimsical posts with a big caveat, and this is no exception. What I was doing here is emphatically NOT the same as designing public services.

This was solo tinkering on a product. Low stakes, no other users, no constraints other than my own time. It could not be more different to the high-stakes, wicked-problem-solving world of designing government services.

Whilst I do think there are practices we could borrow from the world of AI-assisted prototyping and development, we need to be really careful not to confuse these domains.

In a public sector context, design is a social activity as much as anything. It's about building consensus, making decisions together and solving big problems that are typically not solely product-shaped. You don't get to pivot to a different idea if it fails or you get bored.

Thanks

And that’s it so far. There’s plenty more I’d like to do. Adding new sound types. Adding new LFO types. Adding LFOs to EVERYTHING. Arpeggios. A community section so users could share tracks and mixes (if it HAD any users).

Thanks again to Sarah Drummond and Martin Cadwallader for feedback and encouragement, and to Nick Ritchie and the rest of the amazing UCD team at the Incubator for AI for being so up for discussing and exploring ideas like these.